In our recent pillar article on teleoperation data annotation, we explored why VLA models and humanoid robots demand more than 10,000 hours of annotated trajectories to scale. But one question kept surfacing from robotics teams we work with at SyncSoft AI: what happens when you cannot collect enough real-world data? The answer — and the field's most promising shortcut — is simulation. Yet simulation data on its own is dangerously brittle once a robot meets the real world. The bridge between those two realities is precision data annotation, and in 2026, it has become the single most underinvested link in the physical AI supply chain.

The sim-to-real gap — the performance drop robots experience when policies trained in simulation encounter real-world physics, lighting, texture, and noise — remains the most serious bottleneck in scalable robot deployment. A 2026 Macgence analysis found that teams relying purely on synthetic data saw performance degrade by 30 to 60 percent on contact-rich manipulation tasks, even when domain randomization was applied aggressively. The gap is not a software bug you can patch; it is a data quality problem, and it requires human-in-the-loop annotation at multiple pipeline stages to solve.

Why Synthetic Data Alone Fails — and Where Annotation Fixes It

Modern simulators like NVIDIA Isaac Sim, MuJoCo, and Gazebo can render realistic environments (photorealistic, in the case of ray-traced renderers such as Isaac Sim's) and, when many environments run in parallel, step physics far faster than real time. Teams generate millions of synthetic frames overnight, a volume that would take years of teleoperation to match. The appeal is obvious: cheap, fast, endlessly diverse data.

The problem is distributional mismatch. Simulated textures do not capture the micro-irregularities of real surfaces. Simulated lighting does not reproduce the color-temperature drift of warehouse LEDs at different times of day. Simulated contact dynamics do not model the deformation of a soft-plastic bottle when gripped at varying torques. When a VLA model trained on clean simulation data encounters these realities, its grasp success rate can collapse from 95 percent in the simulator to below 40 percent on a physical bench — a finding corroborated by multiple ICRA 2026 workshop papers.

This is where targeted annotation transforms the equation. Rather than discarding synthetic data entirely, leading robotics teams now use a hybrid pipeline: generate large volumes of simulated trajectories, then annotate a carefully selected subset of real-world data to create alignment anchors. These anchors teach the model which simulation features transfer and which must be corrected. At SyncSoft AI, we call this process Synthetic-Real Alignment Annotation, and it has become one of our fastest-growing service lines.

The Four-Layer Annotation Stack for Sim-to-Real Transfer

Bridging the sim-to-real gap is not a single annotation task — it is a layered workflow. Based on our experience building robotics data pipelines for physical AI teams across the United States and Europe, we have formalized a four-layer annotation stack that addresses each failure mode independently.

Layer one is Domain Randomization Validation. When simulation engineers apply domain randomization (varying textures, lighting, object positions, and camera angles), they need human annotators to verify that the randomized frames still depict physically plausible scenarios. Our annotators flag and label implausible frames: objects floating in mid-air, impossible shadow directions, materials with unrealistic reflectance. This curation step keeps the synthetic diversity within what the real world can actually produce, rather than adding implausible noise that degrades performance.
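To make the floating-object case concrete, here is a minimal sketch of an automated pre-filter that could queue suspicious frames for human review. It is an illustration under stated assumptions, not our production tooling: the `SceneObject` fields, the `flag_floating_objects` name, and the 1 cm tolerance are all hypothetical, and the check compares heights only, ignoring horizontal overlap.

```python
from dataclasses import dataclass


@dataclass
class SceneObject:
    """Hypothetical per-object metadata exported by the simulator."""
    name: str
    bottom_z: float      # height of the object's lowest point, in metres
    top_z: float         # height of the object's highest point, in metres
    held: bool = False   # True when a gripper is holding the object


def flag_floating_objects(objects, floor_z=0.0, tolerance=0.01):
    """Return names of objects whose base rests on neither the floor
    nor the top of another object (height-only check)."""
    flagged = []
    for obj in objects:
        if obj.held:
            continue  # a held object is legitimately off the ground
        # Candidate support heights: the floor plus every other object's top.
        supports = [floor_z] + [o.top_z for o in objects if o is not obj]
        if not any(abs(obj.bottom_z - h) <= tolerance for h in supports):
            flagged.append(obj.name)
    return flagged


scene = [
    SceneObject("table", bottom_z=0.0, top_z=0.75),
    SceneObject("mug", bottom_z=0.75, top_z=0.85),
    SceneObject("bottle", bottom_z=1.20, top_z=1.40),  # hovering above the mug
]
print(flag_floating_objects(scene))
```

A filter like this only narrows the review queue. Shadow direction and material reflectance still need a human eye.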

Layer two is Sensor Fusion Alignment. Real robots capture data from RGB cameras, depth sensors, LiDAR, IMUs, and force-torque sensors simultaneously. In simulation, each modality is rendered perfectly synchronized. In reality, sensors have different latencies, noise profiles, and failure modes. Our annotators align real multimodal captures frame-by-frame, labeling temporal offsets, marking sensor dropout events, and creating ground-truth alignment maps that the model uses to learn robust fusion. We process RGB-D, LiDAR point clouds, and IMU logs as a unified pipeline — handling terabyte-scale datasets with our Vietnam-based team at 40 to 60 percent lower cost than comparable US or European services.
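One common way to propose a temporal offset between two sensor streams, before an annotator confirms it, is to cross-correlate a signal both sensors observe. The sketch below is a simplified illustration, assuming both streams are already resampled to the same rate; `estimate_lag` and its parameters are hypothetical names, and the correlation is unnormalized.

```python
def estimate_lag(reference, delayed, max_lag):
    """Return the shift, in samples, at which `delayed` best matches
    `reference`. A positive result means events appear later in `delayed`."""
    # Remove each stream's mean so a constant offset does not bias the score.
    ref_mean = sum(reference) / len(reference)
    del_mean = sum(delayed) / len(delayed)
    ref = [r - ref_mean for r in reference]
    dly = [d - del_mean for d in delayed]

    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, r in enumerate(ref):
            j = i + lag
            if 0 <= j < len(dly):
                score += r * dly[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


# The same impact pulse, seen three samples later by the second sensor.
imu_signal = [0.0] * 10 + [1.0, 2.0, 1.0] + [0.0] * 17
force_signal = [0.0] * 13 + [1.0, 2.0, 1.0] + [0.0] * 14

lag_samples = estimate_lag(imu_signal, force_signal, max_lag=8)
sample_period_ms = 5.0  # assumed 200 Hz sampling rate
print(lag_samples, lag_samples * sample_period_ms)
```

In practice the proposed lag becomes a starting point for the annotator, who confirms or corrects it against the video and marks any dropout windows where no estimate is trustworthy.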

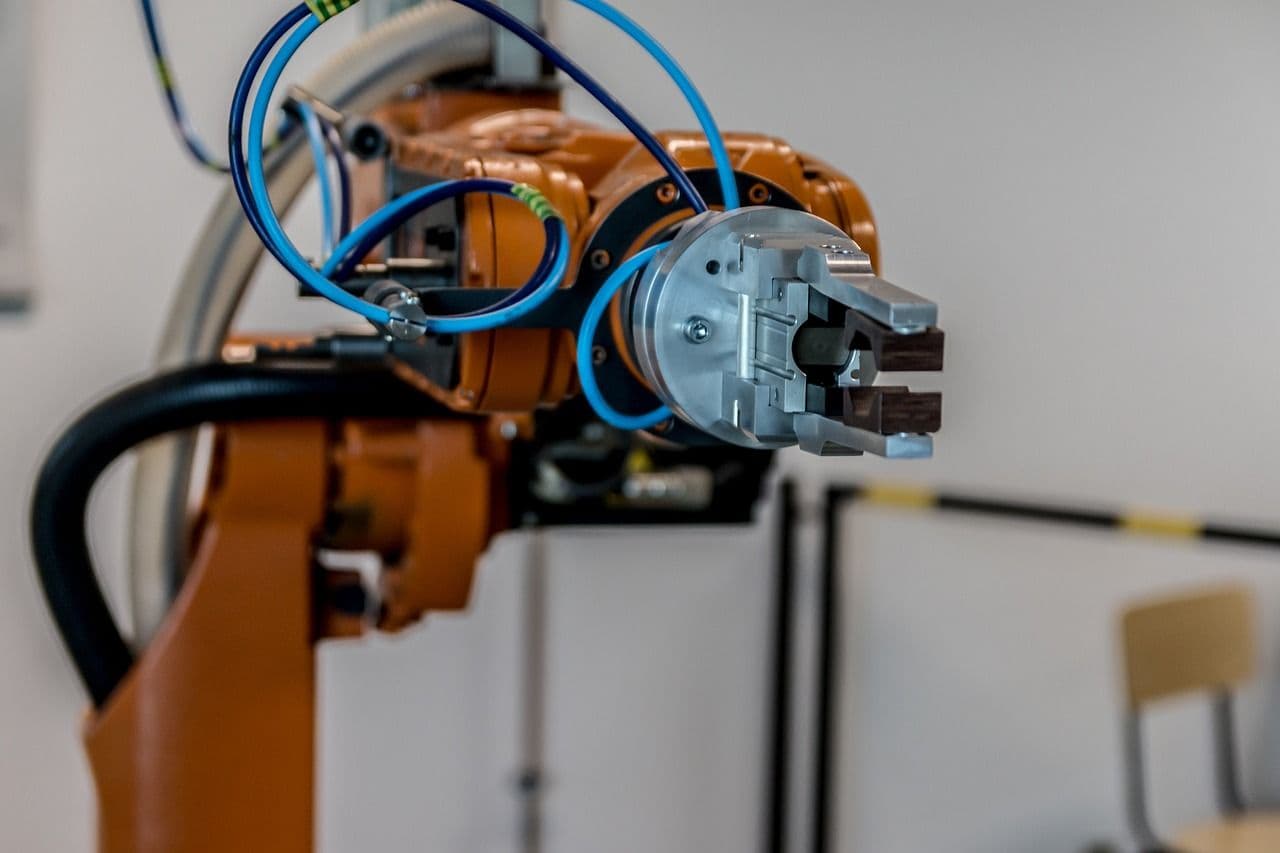

Layer three is Contact and Manipulation Labeling. The most fragile part of sim-to-real transfer is physical contact: grasping, pushing, inserting, pouring. Simulated contact dynamics are notoriously inaccurate. Our annotators label real-world manipulation trajectories with fine-grained contact events — grasp initiation, contact surface, slip detection, force direction, release timing — using a proprietary annotation schema co-developed with three humanoid robotics clients. These labels provide the supervisory signal that teaches VLA models to correct their simulated contact priors.
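Our client schema is proprietary, so the following is a deliberately simplified, hypothetical stand-in that shows the general shape such labels can take. The `ContactKind`, `ContactEvent`, and `validate_sequence` names, and the three event kinds chosen, are assumptions for illustration only.

```python
from dataclasses import dataclass
from enum import Enum


class ContactKind(Enum):
    GRASP_INITIATION = "grasp_initiation"
    SLIP = "slip"
    RELEASE = "release"


@dataclass(frozen=True)
class ContactEvent:
    kind: ContactKind
    frame: int               # frame index where the event is observed
    surface: str             # which object surface is in contact
    force_direction: tuple   # approximate unit vector in the gripper frame


def validate_sequence(events):
    """Return a list of human-readable problems; an empty list means the
    sequence is temporally ordered and every slip or release follows a grasp."""
    errors = []
    holding = False
    last_frame = -1
    for ev in events:
        if ev.frame < last_frame:
            errors.append(f"frame {ev.frame}: out of temporal order")
        last_frame = max(last_frame, ev.frame)
        if ev.kind is ContactKind.GRASP_INITIATION:
            if holding:
                errors.append(f"frame {ev.frame}: grasp while already holding")
            holding = True
        elif not holding:
            errors.append(f"frame {ev.frame}: {ev.kind.value} without an active grasp")
        elif ev.kind is ContactKind.RELEASE:
            holding = False
    return errors


good = [
    ContactEvent(ContactKind.GRASP_INITIATION, 12, "bottle_side", (0.0, 0.0, -1.0)),
    ContactEvent(ContactKind.SLIP, 40, "bottle_side", (0.0, 0.0, -1.0)),
    ContactEvent(ContactKind.RELEASE, 95, "bottle_side", (0.0, 0.0, 1.0)),
]
bad = [ContactEvent(ContactKind.SLIP, 5, "bottle_side", (0.0, 0.0, -1.0))]

print(validate_sequence(good))
print(validate_sequence(bad))
```

Encoding the ordering rules next to the schema lets tooling reject structurally impossible labels before they ever reach a reviewer.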

Layer four is Failure Mode Annotation. Every real-world deployment generates failure trajectories — drops, collisions, missed grasps, path deviations. Most teams discard this data. We treat it as gold. Our annotators classify each failure by root cause (perception error, planning error, execution error, environmental surprise), label the precise frame where the failure cascade begins, and tag the environmental context. This annotated failure corpus becomes the highest-leverage fine-tuning dataset for closing the sim-to-real gap, because it teaches the model exactly where simulation assumptions break down.
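As a rough illustration of how such failure labels might be stored and consumed, the sketch below uses the four root causes named above. Everything else is an assumption: the `FailureLabel` fields, the helper names, and the 30-frame window around the onset frame are hypothetical choices, not a description of our internal format.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class RootCause(Enum):
    PERCEPTION = "perception_error"
    PLANNING = "planning_error"
    EXECUTION = "execution_error"
    ENVIRONMENT = "environmental_surprise"


@dataclass(frozen=True)
class FailureLabel:
    trajectory_id: str
    root_cause: RootCause
    onset_frame: int   # frame where the failure cascade begins
    context: str       # free-text environmental tag


def clip_around_onset(label, total_frames, before=30, after=30):
    """Return a (start, end) frame range around the onset, clamped to the
    trajectory bounds; `end` is exclusive."""
    start = max(0, label.onset_frame - before)
    end = min(total_frames, label.onset_frame + after)
    return start, end


def tally_root_causes(labels):
    """Count how many failures fall under each root cause."""
    return Counter(label.root_cause for label in labels)


labels = [
    FailureLabel("traj_001", RootCause.PERCEPTION, 12, "glare on shrink wrap"),
    FailureLabel("traj_002", RootCause.EXECUTION, 90, "wet tote handle"),
    FailureLabel("traj_003", RootCause.PERCEPTION, 48, "transparent bottle"),
]

print(clip_around_onset(labels[0], total_frames=100))
print(tally_root_causes(labels).most_common(1))
```

The tally shows where to spend the next round of data collection, and the clipped windows are the segments most worth feeding back into fine-tuning.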

Quality Assurance That Matches Robotics-Grade Requirements

Annotation for sim-to-real bridging demands higher accuracy than standard computer vision labeling. A mislabeled bounding box in an object detection dataset typically costs a fraction of a percent of accuracy. A mislabeled contact event in a manipulation trajectory can cause a robot arm to crush a component or drop a fragile object. The cost of annotation errors in physical AI is measured not in model metrics but in hardware damage and safety incidents.

SyncSoft AI applies a multi-layer QA process specifically tuned for robotics data. Every annotated trajectory passes through four checkpoints: the primary annotator, a peer reviewer with domain expertise, a QA lead who validates against project-specific rubrics, and an automated validation layer that checks geometric consistency, temporal coherence, and label distribution statistics. We maintain 95 percent or higher accuracy targets with inter-annotator agreement tracking that flags drift before it propagates. For manipulation labeling, we add a physics-plausibility check: does the annotated contact sequence obey basic Newtonian constraints? If not, it gets re-reviewed.
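Inter-annotator agreement can be measured in several ways. One widely used statistic for two annotators is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is a plain-Python illustration of that statistic, offered as an example of the kind of metric such tracking can use rather than a statement of which metric any particular project uses. The function name and sample labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Fraction of items on which the two annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected if each annotator labeled at random with
    # their own observed label frequencies.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["grasp", "grasp", "slip", "slip"]
annotator_2 = ["grasp", "grasp", "slip", "grasp"]
print(cohens_kappa(annotator_1, annotator_2))
```

Tracking a statistic like this per annotator pair and per label class over time is what makes drift visible: a falling value on one class usually points to an ambiguous guideline rather than a careless annotator.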

This QA rigor is non-negotiable for safety-critical robotics applications. When a humanoid robot operates in a warehouse alongside human workers, the training data that shaped its behavior must be audit-ready. Our QA documentation provides full annotation provenance — who labeled what, when, under which guidelines, and with what confidence — giving our clients the traceability they need for regulatory compliance and internal safety reviews.

Real-World Impact: From AGIBOT WORLD to Production Deployments

The scale of the sim-to-real annotation challenge is growing quickly, as the size of recent public datasets shows. AGIBOT WORLD, released in early 2026, spans diverse real-world environments including commercial spaces and homes, with hundreds of hours of real-world robot interaction data. The Humanoid Everyday dataset aggregates 10,300 trajectories and over three million frames across 260 tasks with multimodal annotations including RGB, depth, LiDAR, and tactile inputs. RoboMIND features 107,000 real-world demonstration trajectories spanning 479 distinct tasks across multiple robot embodiments.

Every one of these datasets requires annotation at a scale and precision that overwhelms in-house teams. The ICRA 2026 VLA Pipeline workshop competition — requiring participants to train on over 10,000 hours of data and evaluate on real robot hardware — has made it clear that annotation capacity is now a competitive bottleneck. Teams that can annotate faster, more accurately, and more cost-effectively will ship robots that actually work outside the lab.

SyncSoft AI has built dedicated robotics annotation teams that scale on demand. Our flexible pricing — per-task, per-hour, or dedicated team models — means a startup burning through its seed round and a Fortune 500 manufacturer both get the annotation throughput they need without over-committing on headcount. Our Vietnam-based workforce delivers this at a price point that makes large-scale sim-to-real annotation projects financially viable for the first time.

The 2026 Toolkit: What Leading Teams Are Annotating Now

Based on our current project portfolio, we see five annotation workstreams dominating sim-to-real robotics in 2026. First, 3D point cloud segmentation for warehouse navigation — labeling traversable surfaces, obstacle boundaries, and dynamic object predictions in LiDAR scans. Second, depth map quality scoring — annotating RGB-D frames for depth estimation accuracy, marking regions where stereo matching fails due to reflective or transparent surfaces. Third, tactile signal labeling — classifying force-torque sensor readings during manipulation tasks to build tactile prediction models. Fourth, language-grounded action annotation — pairing natural language instructions with trajectory segments for VLA model training. Fifth, sim-real correspondence mapping — explicitly labeling which simulated features match real-world observations and which diverge, creating the supervisory signal for domain adaptation layers.
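Taking the fourth workstream as an example, language-grounded action annotation boils down to pairing an instruction string with a frame range. A minimal sketch of that pairing, plus a coverage check that finds unlabeled stretches of a trajectory, might look like the following. The `InstructionSegment` structure and `find_uncovered_frames` helper are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstructionSegment:
    instruction: str
    start_frame: int   # inclusive
    end_frame: int     # exclusive


def find_uncovered_frames(segments, total_frames):
    """Return (start, end) ranges of frames that no segment covers;
    each `end` is exclusive."""
    gaps = []
    cursor = 0
    for seg in sorted(segments, key=lambda s: s.start_frame):
        if seg.start_frame > cursor:
            gaps.append((cursor, seg.start_frame))
        cursor = max(cursor, seg.end_frame)
    if cursor < total_frames:
        gaps.append((cursor, total_frames))
    return gaps


segments = [
    InstructionSegment("pick up the red mug", 0, 40),
    InstructionSegment("place it on the top shelf", 55, 120),
]
print(find_uncovered_frames(segments, total_frames=150))
```

Gaps are not always errors, since a robot may simply be idle, but surfacing them lets a reviewer decide whether a stretch needs an instruction or an explicit "no action" tag.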

Each of these workstreams requires annotators who understand both the data modality and the downstream robotics application. This is not generic image labeling — it is specialized, domain-informed annotation that directly determines whether a two-million-dollar humanoid robot can reliably pick up a coffee cup without shattering it.

Positioning Your Robotics Program for Sim-to-Real Success

The sim-to-real gap is not closing on its own. Better simulators help, but they do not eliminate the need for real-world data annotation. Foundation models help, but they are only as good as the data that fine-tunes them for your specific robot, your specific environment, and your specific tasks.

If you are building a robotics product in 2026, your annotation strategy is your deployment strategy. Teams that invest in structured, multi-layer sim-to-real annotation pipelines — domain randomization validation, sensor fusion alignment, contact labeling, and failure mode analysis — ship robots that work. Teams that skip this step ship demos.

SyncSoft AI specializes in building exactly these pipelines. With scalable data processing across LiDAR point clouds, camera feeds, sensor fusion data, and IMU logs, a multi-layer QA process that targets 95 percent or higher accuracy, competitive Vietnam-based pricing at 40 to 60 percent below US and EU rates, and rapid team scaling from pilot to production, we help robotics teams close the sim-to-real gap without burning their engineering bandwidth on data work. Whether you need 1,000 annotated trajectories for a proof of concept or 100,000 for a production VLA model, our team is ready to deliver.

The robots of 2026 are only as capable as the data they train on. Make sure your data is ready for the real world.